Preface

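We will now dive into the question-answering pipeline and see how to use the offsets to grab the answer to the question at hand from the context, much as we did for grouped entities in the previous section. Then we will see how to deal with very long contexts that end up being truncated. If you are not interested in the question answering task, you can skip this section.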

src link: https://huggingface.co/learn/nlp-course/chapter6/3b

Operating System: Ubuntu 22.04.4 LTS

Reference documentation

Using the question-answering pipeline

As we saw in Chapter 1, we can use the question-answering pipeline like this to get the answer to a question:

from transformers import pipeline

question_answerer = pipeline("question-answering")

context = """
🤗 Transformers is backed by the three most popular deep learning libraries — Jax, PyTorch and TensorFlow — with a seamless integration
between them. It's straightforward to train your models with one before loading them for inference with the other.
"""

question = "Which deep learning libraries back 🤗 Transformers?"

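question_answerer(question=question, context=context)

{'score': 0.97773,
 'start': 78,
 'end': 105,
 'answer': 'Jax, PyTorch and TensorFlow'}

Unlike the other pipelines, which cannot truncate and split texts that are longer than the maximum length accepted by the model (and may therefore miss information at the end of a document), this pipeline can deal with very long contexts and will return the answer to the question even if it is at the end: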

long_context = """

🤗 Transformers: State of the Art NLP

🤗 Transformers provides thousands of pretrained models to perform tasks on texts such as classification, information extraction,

question answering, summarization, translation, text generation and more in over 100 languages.

Its aim is to make cutting-edge NLP easier to use for everyone.

🤗 Transformers provides APIs to quickly download and use those pretrained models on a given text, fine-tune them on your own datasets and

then share them with the community on our model hub. At the same time, each python module defining an architecture is fully standalone and

can be modified to enable quick research experiments.

Why should I use transformers?

1. Easy-to-use state-of-the-art models:

- High performance on NLU and NLG tasks.

- Low barrier to entry for educators and practitioners.

- Few user-facing abstractions with just three classes to learn.

- A unified API for using all our pretrained models.

- Lower compute costs, smaller carbon footprint:

2. Researchers can share trained models instead of always retraining.

- Practitioners can reduce compute time and production costs.

- Dozens of architectures with over 10,000 pretrained models, some in more than 100 languages.

3. Choose the right framework for every part of a model's lifetime:

- Train state-of-the-art models in 3 lines of code.

- Move a single model between TF2.0/PyTorch frameworks at will.

- Seamlessly pick the right framework for training, evaluation and production.

4. Easily customize a model or an example to your needs:

- We provide examples for each architecture to reproduce the results published by its original authors.

- Model internals are exposed as consistently as possible.

- Model files can be used independently of the library for quick experiments.

🤗 Transformers is backed by the three most popular deep learning libraries — Jax, PyTorch and TensorFlow — with a seamless integration

between them. It's straightforward to train your models with one before loading them for inference with the other.

"""

question_answerer(question=question, context=long_context)

{'score': 0.97149,
 'start': 1892,
 'end': 1919,
 'answer': 'Jax, PyTorch and TensorFlow'}

Let's see how it does all of this!

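Using a model for question answering

As with any other pipeline, we start by tokenizing our input and then send it through the model. The checkpoint used by default for the question-answering pipeline is distilbert-base-cased-distilled-squad (the "squad" in the name comes from the dataset on which the model was fine-tuned; we will talk more about the SQuAD dataset in Chapter 7):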

from transformers import AutoTokenizer, AutoModelForQuestionAnswering

model_checkpoint = "distilbert-base-cased-distilled-squad"

tokenizer = AutoTokenizer.from_pretrained(model_checkpoint)

model = AutoModelForQuestionAnswering.from_pretrained(model_checkpoint)

inputs = tokenizer(question, context, return_tensors="pt")

outputs = model(**inputs)

Note that we tokenize the question and the context as a pair, with the question first.

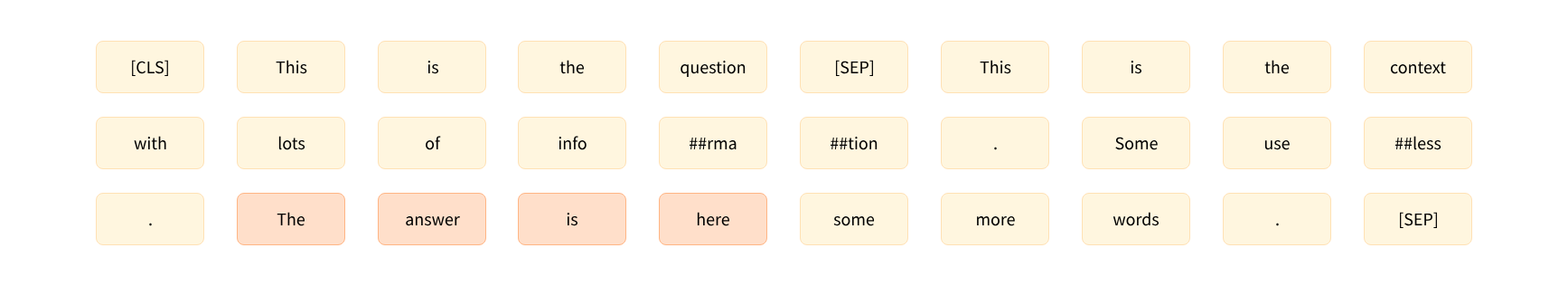

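Models for question answering work a little differently from the models we have seen so far. Using the picture above as an example, the model has been trained to predict the index of the token starting the answer (here 21) and the index of the token where the answer ends (here 24). This is why those models don't return one tensor of logits but two: one for the logits corresponding to the start token of the answer, and one for the logits corresponding to the end token of the answer. Since in this case we have only one input containing 66 tokens, we get: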

start_logits = outputs.start_logits

end_logits = outputs.end_logits

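print(start_logits.shape, end_logits.shape)

torch.Size([1, 66]) torch.Size([1, 66])

To convert those logits into probabilities, we will apply a softmax function, but before that we need to make sure we mask the indices that are not part of the context. Our input is [CLS] question [SEP] context [SEP], so we need to mask the tokens of the question as well as the [SEP] token. We will keep the [CLS] token, however, as some models use it to indicate that the answer is not in the context.

Since we will apply a softmax afterward, we just need to replace the logits we want to mask with a large negative number. Here, we use -10000: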

import torch

sequence_ids = inputs.sequence_ids()

# Mask everything apart from the tokens of the context

mask = [i != 1 for i in sequence_ids]

# Unmask the [CLS] token

mask[0] = False

mask = torch.tensor(mask)[None]

start_logits[mask] = -10000

end_logits[mask] = -10000

Now that we have properly masked the logits corresponding to positions we don't want to predict, we can apply the softmax:

start_probabilities = torch.nn.functional.softmax(start_logits, dim=-1)[0]

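end_probabilities = torch.nn.functional.softmax(end_logits, dim=-1)[0]

At this stage, we could take the argmax of the start and end probabilities, but we might end up with a start index that is greater than the end index, so we need to take a few more precautions. We will compute the probabilities of each possible start_index and end_index where start_index <= end_index, then take the tuple (start_index, end_index) with the highest probability.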

Assuming the events "The answer starts at start_index" and "The answer ends at end_index" to be independent, the probability that the answer starts at start_index and ends at end_index is:

$\mathrm{start\_probabilities}[\mathrm{start\_index}] \times \mathrm{end\_probabilities}[\mathrm{end\_index}]$

So, to compute all the scores, we just need to compute all the products start_probabilities[start_index] × end_probabilities[end_index] where start_index <= end_index.
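The masking, softmax and span-scoring steps can be checked on toy numbers without loading the model. The sketch below is plain Python rather than PyTorch; the logits are made up, and the helper names `softmax` and `best_span` are hypothetical, not part of 🤗 Transformers. It masks one position with -10000, applies a softmax, and then picks the pair with the highest product under the constraint start_index <= end_index.

```python
import math


def softmax(logits):
    """Turn a list of logits into probabilities (numerically stable)."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def best_span(start_probs, end_probs):
    """Return (start, end, score) maximising start_probs[s] * end_probs[e] with s <= e."""
    best = (0, 0, -1.0)
    for s, p_start in enumerate(start_probs):
        for e in range(s, len(end_probs)):
            score = p_start * end_probs[e]
            if score > best[2]:
                best = (s, e, score)
    return best


# Hypothetical logits for 5 tokens; position 1 stands in for a question token.
start_logits = [0.0, 9.0, 1.0, 2.0, 4.0]
end_logits = [0.0, 9.0, 3.0, 4.0, 1.0]
mask = [False, True, False, False, False]

# Masked positions get a large negative logit, so the softmax sends them to ~0.
start_logits = [-10000.0 if m else x for x, m in zip(start_logits, mask)]
end_logits = [-10000.0 if m else x for x, m in zip(end_logits, mask)]

start_probs = softmax(start_logits)
end_probs = softmax(end_logits)

# Independent argmaxes can disagree: here the best start comes after the best end.
naive_start = start_probs.index(max(start_probs))
naive_end = end_probs.index(max(end_probs))

start_index, end_index, score = best_span(start_probs, end_probs)
print(naive_start, naive_end)
print(start_index, end_index, round(score, 4))
```

In this toy case the two separate argmaxes give a start after the end, while the constrained search returns a valid span, which is the reason for scoring pairs jointly.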

First, let's compute all the possible products:

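scores = start_probabilities[:, None] * end_probabilities[None, :]

Then we will mask the values where start_index > end_index by setting them to 0 (the other probabilities are all positive numbers). The torch.triu() function returns the upper triangular part of the 2D tensor passed as an argument, so it will do that masking for us: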

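scores = torch.triu(scores)

Now we just have to get the index of the maximum. Since PyTorch returns the index in the flattened tensor, we need to use the floor division // and modulus % operations to get start_index and end_index: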

max_index = scores.argmax().item()

start_index = max_index // scores.shape[1]

end_index = max_index % scores.shape[1]

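print(scores[start_index, end_index])

We are not quite done yet, but at least we already have the correct score for the answer (you can check this by comparing it to the first result in the previous section):

0.97773

✏️ Try it out! Compute the start and end indices for the five most likely answers.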
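One possible way to approach this exercise is sketched below in plain Python on a made-up 3×3 score matrix rather than the real model output. It sorts the flat positions by score and recovers each (start_index, end_index) pair with divmod, which combines the same floor division and modulus operations used above. The function name `top_k_spans` is hypothetical.

```python
def top_k_spans(scores, k):
    """Return the k best (start_index, end_index, score) triples with start <= end."""
    n_cols = len(scores[0])
    flat = [value for row in scores for value in row]
    # Sort flat positions by score, highest first.
    order = sorted(range(len(flat)), key=lambda i: flat[i], reverse=True)
    spans = []
    for flat_index in order:
        start_index, end_index = divmod(flat_index, n_cols)
        if start_index <= end_index:  # same effect as keeping the upper triangle
            spans.append((start_index, end_index, flat[flat_index]))
        if len(spans) == k:
            break
    return spans


# Made-up scores: rows are start positions, columns are end positions.
scores = [
    [0.02, 0.10, 0.05],
    [0.30, 0.40, 0.08],
    [0.01, 0.03, 0.01],
]

for span in top_k_spans(scores, k=2):
    print(span)
```

The entry at row 1, column 0 has the second-highest raw score but is skipped because its start comes after its end. With PyTorch tensors, torch.topk on the flattened upper-triangular matrix could play the role of the sort.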

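We have the start_index and end_index of the answer in terms of tokens, so now we just need to convert them to character indices in the context. This is where the offsets will be very useful. We can grab them and use them as we did in the token classification task: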

inputs_with_offsets = tokenizer(question, context, return_offsets_mapping=True)

offsets = inputs_with_offsets["offset_mapping"]

start_char, _ = offsets[start_index]

_, end_char = offsets[end_index]

answer = context[start_char:end_char]

Now we just have to format everything to get our result:

result = {
    "answer": answer,
    "start": start_char,
    "end": end_char,
    "score": scores[start_index, end_index].item(),
}
print(result)

{'answer': 'Jax, PyTorch and TensorFlow',
 'start': 78,
 'end': 105,
 'score': 0.97773}

Great! That's the same as in our first example!
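To make the role of the offsets concrete, here is a small stand-alone sketch. It uses a toy regex tokenizer (`toy_tokenize` is a hypothetical helper, not the tokenizer used above) that records a (start_char, end_char) pair for every token, and then recovers an answer substring from a token span in the same way as the code above.

```python
import re


def toy_tokenize(text):
    """Split text into word and punctuation tokens, keeping character offsets."""
    tokens, offsets = [], []
    for match in re.finditer(r"\w+|[^\w\s]", text):
        tokens.append(match.group())
        offsets.append((match.start(), match.end()))
    return tokens, offsets


text = "Backed by Jax, PyTorch and TensorFlow."
tokens, offsets = toy_tokenize(text)

# Suppose a model picked the token span from "Jax" to "TensorFlow".
start_index = tokens.index("Jax")
end_index = tokens.index("TensorFlow")

# Start of the first token and end of the last token delimit the answer.
start_char, _ = offsets[start_index]
_, end_char = offsets[end_index]
answer = text[start_char:end_char]
print(answer)
```

Slicing the original text, rather than joining the tokens back together, keeps the original spacing and punctuation intact.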

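✏️ Try it out! Use the best scores you computed earlier to show the five most likely answers. To check your results, go back to the first pipeline and pass in top_k=5 when calling it.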

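Handling long contexts

If we try to tokenize the question and the long context we used as an example previously, we get a number of tokens higher than the maximum length used in the question-answering pipeline (which is 384):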

inputs = tokenizer(question, long_context)

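print(len(inputs["input_ids"]))

461

So, we need to truncate our inputs to that maximum length. There are several ways we can do this, but we don't want to truncate the question, only the context. Since the context is the second sentence, we will use the "only_second" truncation strategy. The problem that arises then is that the answer to the question may not be in the truncated context. Here, for instance, we picked a question whose answer is toward the end of the context, and when we truncate it that answer is no longer present: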

inputs = tokenizer(question, long_context, max_length=384, truncation="only_second")

print(tokenizer.decode(inputs["input_ids"]))

"""

[CLS] Which deep learning libraries back [UNK] Transformers? [SEP] [UNK] Transformers : State of the Art NLP

[UNK] Transformers provides thousands of pretrained models to perform tasks on texts such as classification, information extraction,

question answering, summarization, translation, text generation and more in over 100 languages.

Its aim is to make cutting-edge NLP easier to use for everyone.

[UNK] Transformers provides APIs to quickly download and use those pretrained models on a given text, fine-tune them on your own datasets and

then share them with the community on our model hub. At the same time, each python module defining an architecture is fully standalone and

can be modified to enable quick research experiments.

Why should I use transformers?

1. Easy-to-use state-of-the-art models:

- High performance on NLU and NLG tasks.

- Low barrier to entry for educators and practitioners.

- Few user-facing abstractions with just three classes to learn.

- A unified API for using all our pretrained models.

- Lower compute costs, smaller carbon footprint:

2. Researchers can share trained models instead of always retraining.

- Practitioners can reduce compute time and production costs.

- Dozens of architectures with over 10,000 pretrained models, some in more than 100 languages.

3. Choose the right framework for every part of a model's lifetime:

- Train state-of-the-art models in 3 lines of code.

- Move a single model between TF2.0/PyTorch frameworks at will.

- Seamlessly pick the right framework for training, evaluation and production.

4. Easily customize a model or an example to your needs:

- We provide examples for each architecture to reproduce the results published by its original authors.

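- Model internal [SEP]

"""

This means the model will have a hard time picking the correct answer. To fix this, the question-answering pipeline allows us to split the context into smaller chunks, specifying the maximum length. To make sure we don't split the context at exactly the wrong place, which would make it impossible to find the answer, it also includes some overlap between the chunks.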

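We can have the tokenizer (fast or slow) do this for us by adding return_overflowing_tokens=True, and we can specify the overlap we want with the stride argument. Here is an example, using a smaller sentence: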

sentence = "This sentence is not too long but we are going to split it anyway."

inputs = tokenizer(
    sentence, truncation=True, return_overflowing_tokens=True, max_length=6, stride=2
)

for ids in inputs["input_ids"]:
    print(tokenizer.decode(ids))

'[CLS] This sentence is not [SEP]'
'[CLS] is not too long [SEP]'
'[CLS] too long but we [SEP]'
'[CLS] but we are going [SEP]'
'[CLS] are going to split [SEP]'
'[CLS] to split it anyway [SEP]'
'[CLS] it anyway. [SEP]'

As we can see, the sentence has been split into chunks in such a way that each entry in inputs["input_ids"] has at most 6 tokens (we would need to add padding for the last entry to be the same size as the others), and there is an overlap of 2 tokens between consecutive entries.
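The chunking pattern in that output can be reproduced with a short plain-Python sketch. It assumes that each window holds max_length minus the two special tokens and that consecutive windows share `stride` tokens, which matches the output shown but is not a description of the tokenizer's internals. The function `split_with_stride` and the word-level token list are hypothetical stand-ins.

```python
def split_with_stride(tokens, max_length, stride, num_special_tokens=2):
    """Split tokens into overlapping windows, leaving room for special tokens."""
    window = max_length - num_special_tokens
    step = window - stride
    if step <= 0:
        raise ValueError("stride must be smaller than the usable window size")
    chunks = []
    start = 0
    while True:
        chunks.append(tokens[start:start + window])
        if start + window >= len(tokens):
            break  # this window already reaches the end of the sequence
        start += step
    return chunks


# Word-level stand-in for the tokenizer output (the final period is its own token).
tokens = "This sentence is not too long but we are going to split it anyway .".split()

for chunk in split_with_stride(tokens, max_length=6, stride=2):
    print(["[CLS]"] + chunk + ["[SEP]"])
```

With max_length=6 and stride=2, each window carries 4 content tokens and advances by 2, which gives 7 chunks for this 15-token sentence.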

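Let's take a closer look at the result of the tokenization:

print(inputs.keys())

dict_keys(['input_ids', 'attention_mask', 'overflow_to_sample_mapping'])

As expected, we get input IDs and an attention mask. The last key, overflow_to_sample_mapping, is a map that tells us which sentence each of the results corresponds to. Here we have 7 results that all come from the (only) sentence we passed to the tokenizer:

print(inputs["overflow_to_sample_mapping"])

[0, 0, 0, 0, 0, 0, 0]

This is more useful when we tokenize several sentences together. For instance, this: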

sentences = [
    "This sentence is not too long but we are going to split it anyway.",
    "This sentence is shorter but will still get split.",
]
inputs = tokenizer(
    sentences, truncation=True, return_overflowing_tokens=True, max_length=6, stride=2
)

print(inputs["overflow_to_sample_mapping"])

gets us:

[0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1]

which means the first sentence is split into 7 chunks as before, and the next 4 chunks come from the second sentence.
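A typical use of this mapping is to regroup chunk-level results by the sentence they came from. The sketch below hard-codes the mapping printed above, so it runs without the tokenizer; the variable `chunks_per_sample` is a name chosen for illustration.

```python
from collections import defaultdict

# One entry per chunk, holding the index of the sentence that chunk came from.
overflow_to_sample_mapping = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1]

chunks_per_sample = defaultdict(list)
for chunk_index, sample_index in enumerate(overflow_to_sample_mapping):
    chunks_per_sample[sample_index].append(chunk_index)

for sample_index, chunk_indices in chunks_per_sample.items():
    print(sample_index, chunk_indices)
```

Any per-chunk prediction can then be looked up by chunk index and attributed to the right input sentence.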

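Now let's go back to our long context. By default the question-answering pipeline uses a maximum length of 384, as we mentioned earlier, and a stride of 128, which correspond to the way the model was fine-tuned (you can adjust those parameters by passing the max_seq_len and doc_stride arguments when calling the pipeline). We will thus use those parameters when tokenizing. We will also add padding (to have samples of the same length, so we can build tensors) and ask for the offsets: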

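inputs = tokenizer(
    question,
    long_context,
    stride=128,
    max_length=384,
    padding="longest",
    truncation="only_second",
    return_overflowing_tokens=True,
    return_offsets_mapping=True,
)

Those inputs will contain the input IDs and attention masks the model expects, as well as the offsets and the overflow_to_sample_mapping we just talked about. Since those two are not parameters used by the model, we pop them out of the inputs (and we won't store the map, since it's not useful here) before converting the rest to tensors: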

_ = inputs.pop("overflow_to_sample_mapping")

offsets = inputs.pop("offset_mapping")

inputs = inputs.convert_to_tensors("pt")

print(inputs["input_ids"].shape)

torch.Size([2, 384])

Our long context was split in two, which means that after it goes through the model, we will have two sets of start and end logits:

outputs = model(**inputs)

start_logits = outputs.start_logits

end_logits = outputs.end_logits

print(start_logits.shape, end_logits.shape)

torch.Size([2, 384]) torch.Size([2, 384])

As before, we first mask the tokens that are not part of the context before taking the softmax. We also mask all the padding tokens (as flagged by the attention mask):

sequence_ids = inputs.sequence_ids()

# Mask everything apart from the tokens of the context

mask = [i != 1 for i in sequence_ids]

# Unmask the [CLS] token

mask[0] = False

# Mask all the [PAD] tokens

mask = torch.logical_or(torch.tensor(mask)[None], (inputs["attention_mask"] == 0))

start_logits[mask] = -10000

end_logits[mask] = -10000

Then we can use the softmax to convert our logits to probabilities:

start_probabilities = torch.nn.functional.softmax(start_logits, dim=-1)

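end_probabilities = torch.nn.functional.softmax(end_logits, dim=-1)

The next step is similar to what we did for the small context, but we repeat it for each of our two chunks. We attribute a score to all possible answer spans, then take the span with the best score: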

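candidates = []
for start_probs, end_probs in zip(start_probabilities, end_probabilities):
    scores = start_probs[:, None] * end_probs[None, :]
    idx = torch.triu(scores).argmax().item()

    start_idx = idx // scores.shape[1]
    end_idx = idx % scores.shape[1]
    score = scores[start_idx, end_idx].item()
    candidates.append((start_idx, end_idx, score))

print(candidates)

[(0, 18, 0.33867), (173, 184, 0.97149)]

Those two candidates correspond to the best answers the model was able to find in each chunk. The model is much more confident that the right answer is in the second part (which is a good sign!). Now we just have to map those two token spans to spans of characters in the context (we only need to map the second one to get our answer, but it's interesting to see what the model picked in the first chunk).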

✏️ Try it out! Adapt the code above to return the scores and spans for the five most likely answers (in total, not per chunk).
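As a hint for this exercise, the sketch below shows one way to rank spans across several chunks at once. It works on made-up nested lists instead of the real probability tensors, and `top_k_overall` is a hypothetical helper. Every valid span from every chunk goes into one pool, and heapq.nlargest picks the best k overall.

```python
import heapq


def top_k_overall(chunk_scores, k):
    """Return the k best (score, chunk, start, end) tuples across all chunks."""
    candidates = []
    for chunk_index, scores in enumerate(chunk_scores):
        for start_idx, row in enumerate(scores):
            for end_idx in range(start_idx, len(row)):  # only start <= end
                candidates.append((row[end_idx], chunk_index, start_idx, end_idx))
    return heapq.nlargest(k, candidates)


# Made-up score matrices for two chunks of three tokens each.
chunk_scores = [
    [[0.30, 0.02, 0.01], [0.00, 0.05, 0.01], [0.00, 0.00, 0.02]],
    [[0.01, 0.03, 0.02], [0.00, 0.10, 0.90], [0.00, 0.00, 0.04]],
]

for score, chunk_index, start_idx, end_idx in top_k_overall(chunk_scores, k=3):
    print(chunk_index, start_idx, end_idx, score)
```

Keeping the chunk index next to each span matters because the token indices are relative to a chunk, so the matching per-chunk offsets are needed to map a span back to characters.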

The offsets we grabbed earlier are actually a list of offsets, with one list per chunk of text:

for candidate, offset in zip(candidates, offsets):
    start_token, end_token, score = candidate
    start_char, _ = offset[start_token]
    _, end_char = offset[end_token]
    answer = long_context[start_char:end_char]
    result = {"answer": answer, "start": start_char, "end": end_char, "score": score}
    print(result)

{'answer': '\n🤗 Transformers: State of the Art NLP', 'start': 0, 'end': 37, 'score': 0.33867}
{'answer': 'Jax, PyTorch and TensorFlow', 'start': 1892, 'end': 1919, 'score': 0.97149}

If we ignore the first result, we get the same result as our pipeline for this long context. Great!

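✏️ Try it out! Use the best scores you computed before to show the five most likely answers (for the whole context, not each chunk). To check your results, go back to the first pipeline and pass in top_k=5 when calling it.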

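This concludes our deep dive into the tokenizer's capabilities. We will put all of this into practice again in the next chapter, when we show how to fine-tune a model on a range of common NLP tasks.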

Closing words

Blog post number 254 is done, and I'm happy!!!!

Today is another day full of hope.